4 Mar 2026 · 9 minute read

Anthropic brings evals to skill-creator. Here’s why that’s a big deal

Anthropic's skill-creator is the primary way developers create skills. If you try it out today, you'll find something new in it: creating and executing evals for your skill.

This isn't just an incremental capability - it's a big step in treating skills and context as a piece of software, and one that requires testing to get right. It's very much worth your attention.

Why evaluating context matters

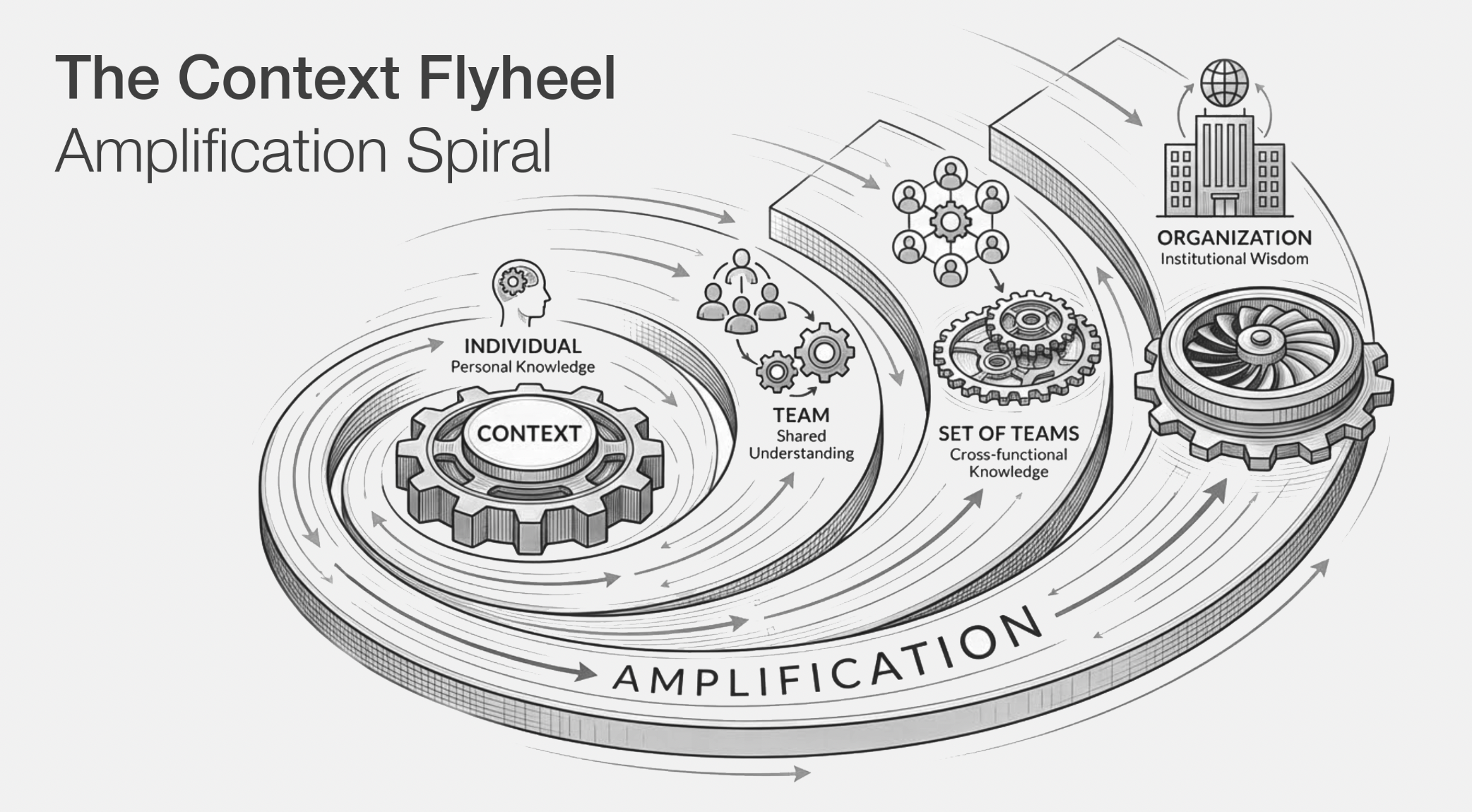

The quality of context you give coding agents is becoming one of the most debated topics in AI development.

A recent study from ETH Zurich found that developer-written context files improved agent task completion by just 4% on average, while LLM-generated ones actually made performance worse by 3%, and both increased costs by over 20%. The study made the rounds, and the hottest take was predictable: stop writing context files altogether.

That's the wrong conclusion. As Simon Maple argued, the problem isn't that context files are useless. It's that unvalidated context is useless.

When you write instructions without a feedback loop, you end up with files full of things the agent would have figured out on its own, mixed with instructions that are actively wrong or misleading. The answer isn't less context. It's tested context.

Anthropic appears to agree. They've just updated their skill-creator skill to include eval creation, running test scenarios through sub-agents that execute, grade, and compare skill performance.

At Tessl, we've long advocated for the importance of measuring & optimizing agent context, so it's encouraging to see Anthropic push in this direction!

What Anthropic shipped: evals in skill-creator

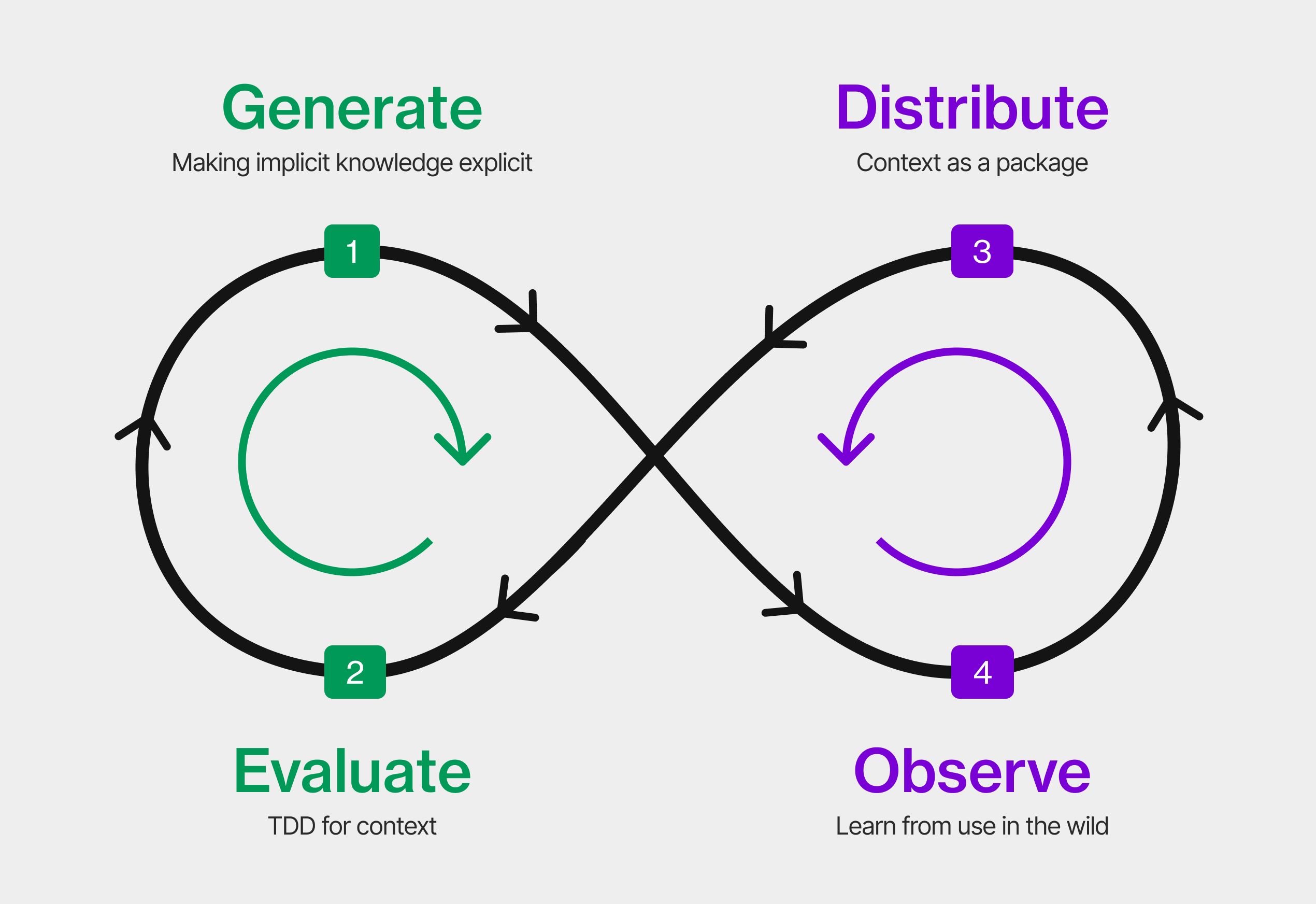

The updated skill-creator now operates in four modes: Create, Eval, Improve, and Benchmark. The interesting addition is the eval pipeline, which uses four composable sub-agents working in parallel:

- An executor runs skills against eval prompts.

- A grader evaluates outputs against defined expectations.

- A comparator performs blind A/B comparisons between skill versions.

- An analyzer surfaces patterns that aggregate stats might hide.
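To make the comparator's role concrete, here is a minimal Python sketch of a blind A/B comparison. It is an illustration only, not Anthropic's implementation: the function names, the `judge` callback, and the toy judge are all hypothetical. The idea is simply that the judge sees two outputs in random order, without version labels, and the labels are restored afterwards.

```python
import random
from typing import Callable

# Hypothetical judge signature: receives two anonymous outputs and returns
# 0 if it prefers the first one shown, 1 if it prefers the second.
Judge = Callable[[str, str], int]


def blind_compare(output_a: str, output_b: str, judge: Judge, rng: random.Random) -> str:
    """Return "A" or "B" for the preferred version, hiding labels from the judge."""
    # Randomize presentation order so position cannot reveal the version.
    swapped = rng.random() < 0.5
    first, second = (output_b, output_a) if swapped else (output_a, output_b)

    choice = judge(first, second)
    if choice not in (0, 1):
        raise ValueError("judge must return 0 or 1")

    # Unblind: map the judge's positional choice back to a version label.
    picked_first = choice == 0
    if swapped:
        return "B" if picked_first else "A"
    return "A" if picked_first else "B"


def longer_is_better(first: str, second: str) -> int:
    """Toy judge for demonstration only: prefers the longer output."""
    return 0 if len(first) >= len(second) else 1


if __name__ == "__main__":
    winner = blind_compare(
        "Looks fine.",
        "Critical: forEach with an async callback is not awaited.",
        longer_is_better,
        random.Random(),
    )
    print(winner)
```

In a real pipeline the judge would be a model call rather than a length check; the shuffling and unblinding around it would stay the same.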

Below is a snapshot of skill-creator running in Claude Code after we asked it to build a simple JS code reviewer skill. Each test case is a JSON file that pairs a realistic user prompt with a set of assertions: specific, verifiable checks that the output should pass.

Here's one from our JS code reviewer skill:

```json
{
  "eval_id": 2,
  "eval_name": "api-handler",
  "prompt": "Review this Express handler for me — it processes orders. Any issues?",
  "assertions": [
    {"id": "no-input-validation", "text": "Flags that req.body items are used without validation", "type": "quality"},
    {"id": "foreach-async-inventory", "text": "Flags forEach with async callback for inventory updates (not awaited)", "type": "quality"},
    {"id": "loose-equality", "text": "Flags == instead of === for coupon code comparison", "type": "quality"},
    {"id": "error-logging", "text": "Flags console.log(err) as inadequate error handling", "type": "quality"},
    {"id": "unused-validation", "text": "Notes that validateOrder exists but is never called", "type": "quality"},
    {"id": "quantity-zero", "text": "Flags that quantity < 0 should be <= 0", "type": "quality"},
    {"id": "structured-output", "text": "Review uses severity levels (critical/warning/suggestion)", "type": "format"}
  ]
}
```

Once the full eval process has run, an HTML report is rendered that shows both the outputs and the benchmarks comparing runs with and without the skill.

In this example, both configurations (with and without the skill) scored 100% on all assertions, because the test cases used well-known JS/TS antipatterns that a strong model (Opus 4.6) will catch with or without guidance.

This is exactly what the benchmark is designed to surface. Identical pass rates tell you to make your test cases harder, or to focus the skill on areas where the model genuinely needs help.
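The with-skill versus without-skill reading can be sketched in a few lines of Python. This is a hedged illustration, not skill-creator's actual code or report format: `AssertionResult`, `pass_rate`, and `diagnose` are hypothetical names, and the graded results are hard-coded to mirror the scenario described above.

```python
from dataclasses import dataclass


@dataclass
class AssertionResult:
    """One graded assertion (hypothetical shape, for illustration)."""
    assertion_id: str
    passed: bool


def pass_rate(results: list[AssertionResult]) -> float:
    """Fraction of assertions that passed."""
    if not results:
        raise ValueError("no graded assertions")
    return sum(r.passed for r in results) / len(results)


def diagnose(with_skill: list[AssertionResult],
             without_skill: list[AssertionResult],
             tolerance: float = 0.0) -> str:
    """Interpret the gap between the two configurations."""
    delta = pass_rate(with_skill) - pass_rate(without_skill)
    if abs(delta) <= tolerance:
        return "no measurable difference: harden the test cases or refocus the skill"
    return "skill helps" if delta > 0 else "skill hurts"


ASSERTION_IDS = [
    "no-input-validation", "foreach-async-inventory", "loose-equality",
    "error-logging", "unused-validation", "quantity-zero", "structured-output",
]

# Both configurations pass every assertion, as in the example run.
all_pass = [AssertionResult(a, True) for a in ASSERTION_IDS]

if __name__ == "__main__":
    print(diagnose(all_pass, all_pass))
```

A non-zero `tolerance` is one way to avoid over-reading small gaps when only a handful of assertions are graded.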

Measuring skills: what good evals look like

Anthropic's release focuses on task-based testing: define scenarios, run the skill against them, grade whether the output meets your assertions.

This is a great starting point. It gives skill creators a way to measure whether their skill actually improves agent behavior.

The next step is making those results portable - repeatable across versions, models, and teams, and visible to the folks deciding whether to install.

This is exactly what the Tessl Registry is working on!

1️⃣ Cloud-scale evaluation: you can create and execute dozens of scenarios across multiple configurations without being constrained by a local session.

2️⃣ CI/CD integration: evals run automatically whenever you publish a new version, catching regressions before they reach users. A skill that passes today might fail next month when a model updates.

3️⃣ Version-pinned results: eval results are tied to specific published versions, so you know exactly how v1.2.0 performs versus v1.1.0. Did that last edit improve things, or introduce a subtle regression?

4️⃣ Cross-model, cross-agent comparison: a skill that works well with Claude Sonnet 4.5 might behave differently on GPT-5 or Gemini.

5️⃣ Public visibility for creators and users: eval results are surfaced directly on each skill's registry page, so creators can demonstrate that their skill works and users can assess quality before installing.
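As a rough illustration of the CI/CD and version-pinning points, the Python sketch below compares per-assertion results between two published versions and lists regressions. It does not use the Tessl Registry's API or data format: the function name, the dictionaries, and the results are all hypothetical.

```python
import sys


def find_regressions(previous: dict[str, bool], current: dict[str, bool]) -> list[str]:
    """Assertion ids that passed in the previous version but fail
    (or are missing) in the current one."""
    return sorted(
        assertion_id
        for assertion_id, passed in previous.items()
        if passed and not current.get(assertion_id, False)
    )


# Hypothetical per-assertion results for two published versions.
results_v1_1_0 = {"loose-equality": True, "error-logging": True, "structured-output": True}
results_v1_2_0 = {"loose-equality": True, "error-logging": True, "structured-output": False}

if __name__ == "__main__":
    regressions = find_regressions(results_v1_1_0, results_v1_2_0)
    if regressions:
        print("Regressed assertions:", ", ".join(regressions))
        sys.exit(1)  # a non-zero exit code fails the CI job
```

Running the same comparison after a model update, with the skill version held fixed, is one way to catch the "passes today, fails next month" case.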

👉 Fire up Claude Code, point it at Tessl’s evaluation docs, and optimize your skill now.

For comparison with what we've seen from Anthropic, here are some of the evaluation scenarios and results available on our Registry:

- Cisco's software-security skill, built on Project CodeGuard, scores 84% overall with a total 1.78x improvement, nearly doubling the agent's ability to write secure code across 23 rule categories.

- ElevenLabs publishes a text-to-speech skill that scores 93% overall on the Registry, with a 94% review score and a 1.32x improvement in agent success rate when the skill is active. That means coding agents are 32% more likely to use the ElevenLabs API correctly with the skill than without it.

- Hugging Face's tool-builder skill scores 81% overall with a 1.63x improvement in correct API usage when building tools against the Hugging Face API.

These numbers matter because they turn a subjective question ("is this skill good?") into an empirical one. A dev browsing the Registry can see, before installing anything, whether a skill has been evaluated and how much it actually helps.
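The step from "1.32x" to "32% more likely" is simple arithmetic. The Python sketch below assumes the multiplier is the ratio of the with-skill success rate to the baseline success rate; the Registry's exact formula is not stated here, and the 0.50 and 0.66 rates are made-up inputs chosen only to reproduce a 1.32x ratio.

```python
def improvement_multiplier(with_skill_rate: float, baseline_rate: float) -> float:
    """Ratio of success rates (assumed definition, not a documented formula)."""
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be positive")
    return with_skill_rate / baseline_rate


def relative_gain_percent(multiplier: float) -> float:
    """Convert a multiplier such as 1.32 into a relative gain of about 32 percent."""
    return (multiplier - 1.0) * 100.0


if __name__ == "__main__":
    # Hypothetical rates: 50% success without the skill, 66% with it.
    m = improvement_multiplier(0.66, 0.50)
    print(f"{m:.2f}x, about {relative_gain_percent(m):.0f}% more likely")

    # Multipliers quoted in the list above.
    for quoted in (1.78, 1.32, 1.63):
        print(f"{quoted}x is a relative gain of about {relative_gain_percent(quoted):.0f}%")
```

Read this way, 1.78x is a 78% relative gain, which is why it is described as "nearly doubling" rather than doubling.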

What this means for developers, and the question for skill creators

Two things stand out, in my opinion.

First, the "skills as software" idea is gaining real traction. When the Tessl skills package manager launched in January, the argument that skills need versioning, testing, distribution, and lifecycle management felt forward-looking to some. Anthropic embedding eval creation into their skill-creator, barely a month later, is strong validation. When the model provider starts building the testing infrastructure, it's a good sign that the underlying premise is sound.

Second, the bar for published skills is rising. Publishing a skill to any repo is self-serve with no quality gate. As eval tooling matures, devs will start filtering for evaluated skills the same way they filter for tested npm packages. If you're publishing skills, adding evals now puts you ahead of where things are heading.

If you're publishing skills today, or just sharing them with your team, ask yourself: Do your skills have tests? Do they have defined scenarios? Do they have a way to detect when they stop working?

If you want to start evaluating your skills, you can try Anthropic's skill-creator, which lets you define test cases and run them locally in Claude Code.

For a more integrated workflow, spin up Claude Code, point it at Tessl’s evaluation docs, and optimize your skill now!