19 Feb 2026 · 14 minute read

My Coding Agent Needed a Package Manager for Its Own Brain (And I Gave It One Using a Skills Registry)

TLDR:

- Three months is a long time in AI tooling. Long enough for “cutting edge” to become “anti-pattern.”

- We rebuilt spec-kit as proper agent skills, reran the airline loyalty chatbot, and the agent made stack decisions by reading actual library documentation instead of hallucinating it. You can tell.

- Viktor says it’s too complicated. Viktor also admits it’s comprehensive. Viktor contains multitudes.

Viktor Gamov and I are at JFokus in Stockholm. It’s snowing. We’re wearing matching jackets that look alarmingly like FBI raid gear. (The FBI eventually replied to my social media posts tagging them. They said “stop tagging us.” I consider this engagement.)

This is episode three of “Agent Johnson, Special Agent Johnson, No Relation,” the stream where we embarrass ourselves building things with AI coding agents so you don’t have to. (See previous episodes of public humiliation: episode 1, where our agent silently lied about airline miles, and episode 2, where it invented a fictional API and blamed CORS when nothing worked.)

Last time, I promised we’d take the spec-kit workflow and repackage it as proper AI agent skills. Here’s how that went.

The CLI That Aged Like Milk

Spec-kit ships with its own CLI that downloads shell scripts, PowerShell scripts, and markdown templates into a custom directory structure.

Three months ago, this was the best we had.

First problem: all that context loads into the context window permanently, whether the agent needs it for the current step or not, stealing attention from the stuff that matters.

Second: the scripts work, but only if the agent decides to run them. Spec-kit’s prompts ask the agent nicely to invoke shell scripts at the right time. Sometimes it does. Sometimes it doesn’t. “Please run this script now” in a markdown file, held together by vibes and prayer.

This was an excellent idea. In November.

Why Skills Change Everything

Anthropic released skills about three months ago, and they’ve since become an open industry standard. They solve both problems, and we’re not the only ones who noticed. OpenSpec, a competing SDD framework that Viktor won’t shut up about, independently rebuilt their entire workflow around skills too. When rival projects converge on the same architecture, that’s the architecture winning.

The critical difference is how skills load into context.

When your agent starts a conversation, it sees only the name and description of each skill. Ten spec-kit prompts in the old world meant ten prompts’ worth of tokens consumed permanently. Ten skills means ten short descriptions, and the full content (instructions, scripts, templates) loads only for the one the agent actually needs right now.
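To make the arithmetic concrete, here's a back-of-the-napkin sketch in Python. Everything in it is an assumption for illustration: the `Skill` class, the word count standing in for a tokenizer, and the 500-word skill bodies. Real agents count tokens differently and decide for themselves when to load a skill, but the shape of the saving should be similar.

```python
from dataclasses import dataclass


@dataclass
class Skill:
    name: str
    description: str
    body: str  # full instructions, loaded only on demand


def rough_tokens(text: str) -> int:
    # Crude stand-in for a tokenizer: count whitespace-separated words.
    return len(text.split())


def startup_cost(skills: list[Skill]) -> int:
    # At conversation start only name + description are visible.
    return sum(rough_tokens(s.name + " " + s.description) for s in skills)


def cost_with_active(skills: list[Skill], active: str) -> int:
    # Activating one skill adds only that skill's body to the context.
    body = next(s.body for s in skills if s.name == active)
    return startup_cost(skills) + rough_tokens(body)


def always_loaded_cost(skills: list[Skill]) -> int:
    # The old prompt-style setup: everything sits in context all the time.
    return sum(
        rough_tokens(s.name + " " + s.description + " " + s.body) for s in skills
    )


# Ten hypothetical skills, each with a 500-word body.
skills = [
    Skill(f"step-{i}", "short description of the step", "instruction " * 500)
    for i in range(10)
]

print(always_loaded_cost(skills))          # 5060
print(startup_cost(skills))                # 60
print(cost_with_active(skills, "step-3"))  # 560
```

With these made-up numbers, the always-loaded setup costs 5,060 words of context, while the skills setup costs 60 at startup and 560 once one skill is active.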

The second win: native script support. Skills don’t ask the agent to please run a script if it’s not too much trouble. Scripts are first-class. A skill can run git init, create directories, commit changes, all deterministically, without burning tokens on something a three-line bash script handles perfectly.

The third win is the one we can’t fully explain, which makes it the most interesting. The model follows skills better than it follows prompts. Somebody inside the secret sanctums of Anthropic, OpenAI, and Google probably knows exactly why. The rest of us are theologians of the context window, interpreting signs about forces we cannot directly observe. The Church of LLM works in mysterious ways, but the congregation can measure miracles: many tiles and skills in the Tessl registry ship with evals that track how reliably the agent follows instructions. Whatever’s happening in the holy of holies, skills get treated with more weight than random markdown.

Spec-kit, Rebuilt as Skills

So I forked spec-kit and reimplemented the whole thing as skills, packaged as a Tessl tile. You install it like any other tile from the Tessl registry:

tessl install tessl-labs/intent-integrity-kit

Same steps you know from spec-kit (constitution, specify, plan, tasks, implement) but with meaningful additions: utility commands in speckit-core, Tessl integration so the agent can query the registry during planning and implementation, a testify step we’ll cover in the next post, and git commits at every step so you can roll back without asking the agent to “undo” and watching it make things worse.

One less obscure CLI downloaded from a script that runs something on your computer. (Maybe don’t curl-pipe-bash your way into agent tooling.) tessl install, done. The skills are in your project, and each step loads only when triggered.

The Airline Loyalty Bot, Take Three

Back to our recurring patient. We’ve built this airline loyalty chatbot twice (vibecoded disaster, then spec-kit with Tessl tiles), and now we’re running it through the skills-based workflow.

We start with /spec-kit core init, which runs a script (not an LLM call) to set up the project structure: git init, directories, memory bank. Deterministic, fast, zero tokens burned.
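For a feel of what "deterministic, zero tokens" means, here is a minimal sketch of an init step in Python. It is not the actual script that ships in the tile: the directory names `specs` and `memory` are assumptions, and the real skill may well be a few lines of bash. The point is only that nothing here needs a model to think.

```python
import shutil
import subprocess
import tempfile
from pathlib import Path

# Hypothetical layout; the real skill's directory names may differ.
DIRECTORIES = ["specs", "memory"]


def init_project(root: Path) -> list[str]:
    """Create the project skeleton and return the steps that were performed."""
    steps = []
    for name in DIRECTORIES:
        # exist_ok makes the step safe to repeat.
        (root / name).mkdir(parents=True, exist_ok=True)
        steps.append(f"mkdir {name}")
    # Only initialise git if it is installed and no repository exists yet.
    if shutil.which("git") and not (root / ".git").exists():
        subprocess.run(["git", "init", "--quiet", str(root)], check=True)
        steps.append("git init")
    return steps


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        print(init_project(Path(tmp)))
```

Running it twice on the same directory does no harm: the directories already exist and the repository is left alone, which is the kind of boring predictability you want from setup code.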

Then /spec-kit-00-constitution. (As promised: The Return of the Bald Eagle.) We define three governing principles: simplicity first, test-driven development, and premium user experience. Three amendments, ratified unanimously. Dr. Franklin approves. The powdered wigs are on backorder (they will match perfectly with the FBI raid windbreakers). The agent drafts it, commits it, and we can edit the markdown directly if we disagree.

Then /spec-kit-01-specify: we describe the airline loyalty chatbot feature, and the agent creates a spec with user stories and acceptance criteria in Given/When/Then format. Those acceptance scenarios become important later (very important, but that’s post 4).

Educated Decisions, Not Hallucinated Ones

When the agent reaches the /speckit-03-plan step, it needs to decide on a tech stack. In episode 2, we’d manually tell the agent to search for tiles after planning. In the skills version, the planning step automatically calls the Tessl registry to research libraries before making decisions.

The agent queried the Tessl registry for tiles across the space: Next.js and alternatives, React and alternatives, testing frameworks, CSS approaches, AI SDKs. It pulled documentation for each, compared them, and filtered decisions through the constitution’s principles. The founding fathers wanted simplicity first, so the agent didn’t reach for whatever overengineered JS framework is trending this week. There’s a research document capturing which alternatives were considered and why the constitution’s priorities tipped the balance. If you disagree with a decision, you can push back with specifics instead of “no, use Vue” and hoping the agent understands.

Viktor asked during the stream: does the registry have tiles for Spring AI? For Embabel? Yes, over ten thousand tiles for public libraries, and if something’s missing, you can request it. Viktor submitted a request live on camera, and the Embabel tile landed in the registry a couple of minutes later. Because that’s the kind of content our audience deserves.

The App, This Time

The implementation ran through tasks in order, tests first, because the constitution demands TDD and the framework enforces it.

We hit bugs. (We always hit bugs. This is the series where things go wrong on camera.) The best one: the agent reported everything done. Green across the board. TDD honored, the constitution respected, all tests passing. Except the app wouldn’t give a response.

It turned out the end-to-end tests were never run. Some of them were failing, so the agent, in its sneaky five-year-old way, just… didn’t run them. Whatever tests it did run passed, so technically it didn’t lie, did it? Whoever has kids is chuckling with us right now.

At which point the reasonable reader asks: what was the point of all this ceremony?

Fair question. There’s a gap in software development that’s existed forever, and AI made it wider: the intent-to-code chasm. The distance between what’s in your head and what ends up running in production. Spec-driven development promises to bridge it, and it does, halfway. The first half of the chasm (intent to spec) is genuinely closed: you write a constitution, you specify requirements in Given/When/Then, you plan with real documentation, the agent commits everything to git. Humans verify the spec. That half is solid.

The second half (spec to code) is still a monkey on a keyboard.

After all this careful preparation we handed the keys to the agent and said “implement,” and then we did what everyone does: we stopped reading the code. We barely have the patience to read our own code from six months ago. So we let the monkey run with it and prayed. All that discipline closing the first half of the chasm, and the agent immediately exploited the open second half: report success, skip the tests that would prove otherwise.

Here’s what saved us: because we closed the first half, we knew where to look. The specs defined what the app should do. The tiles contained actual API documentation. The failing e2e tests pointed directly at the gap between what the agent claimed and what it delivered. In episode 1, we had no idea where to start debugging. In episode 2, we spent an hour chasing CORS ghosts. This time, the failure was traceable. SDD didn’t prevent the monkey from cheating on its homework, but it gave us the answer key to check against.
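The check we should have run ourselves is small enough to sketch. This is a hypothetical helper, not part of spec-kit or Tessl: it assumes you can list the tests the spec expects and get a pass/fail map of the tests that actually ran. The test names are invented.

```python
def verify_run(expected: set[str], results: dict[str, bool]) -> dict[str, list[str]]:
    """Report expected tests that never ran and tests that ran and failed."""
    never_run = sorted(expected - set(results))
    failed = sorted(name for name, passed in results.items() if not passed)
    return {"never_run": never_run, "failed": failed}


def is_green(expected: set[str], results: dict[str, bool]) -> bool:
    # Green means every expected test ran AND every test that ran passed.
    report = verify_run(expected, results)
    return not report["never_run"] and not report["failed"]


# What the spec says should be tested (invented names).
expected = {"unit/miles-balance", "unit/tier-upgrade", "e2e/chat-responds"}
# What the agent actually ran: the e2e test is quietly missing.
results = {"unit/miles-balance": True, "unit/tier-upgrade": True}

print(all(results.values()))          # True: "all tests passing"
print(is_green(expected, results))    # False
print(verify_run(expected, results))  # {'never_run': ['e2e/chat-responds'], 'failed': []}
```

"Everything I ran passed" and "everything passed" are different claims, and only the second one is worth a green checkmark.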

Viktor noticed the UI, too. When your AI-generated app doesn’t look like every other AI-generated app, something is working differently.

What We’re Building Toward

Viktor pushed back. “Too complicated still.” Then, in the same breath: “For covering all aspects of the software development lifecycle, this is comprehensive.” Then he landed an analogy I’m stealing for every future talk: vibecoding is fast food. Spec-driven development is a chef’s meal. Yes, both are food, and the former is faster, but one is junk and the other is a masterpiece.

He’s right about the meal. But we’re serving it half-cooked. SDD bridges the intent-to-code chasm halfway: intent to spec, verified, solid. Spec to code is still hope. How do we actually verify that what the monkey built matches what we asked for?

That’s testify. That’s assertion hashing with tamper-proof git notes. That’s the Intent Integrity Chain, and it closes the second half of the chasm. That’s also the next post, where we discover a framework bug live on camera.
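We'll get to the real thing in the next post. In the meantime, here is a minimal sketch of the general idea behind hashing assertions, assuming nothing about how testify actually does it: fingerprint the Given/When/Then scenarios the humans approved, then notice if anyone (say, a monkey) edits them later. The function names and scenarios are made up, and the git notes part is left out entirely.

```python
import hashlib


def normalize(scenario: str) -> str:
    # Collapse whitespace so reformatting alone does not change the hash.
    return " ".join(scenario.split())


def hash_assertions(scenarios: list[str]) -> str:
    """Return a SHA-256 fingerprint of the scenarios, in order."""
    digest = hashlib.sha256()
    for scenario in scenarios:
        digest.update(normalize(scenario).encode("utf-8"))
        digest.update(b"\n")  # separator between scenarios
    return digest.hexdigest()


approved = [
    "Given a member with 5000 miles\n"
    "When they ask for their balance\n"
    "Then the bot replies with 5000"
]
recorded = hash_assertions(approved)

# Same scenario, different whitespace.
reformatted = [
    "Given a member with 5000 miles   When they ask for their balance  "
    "Then the bot replies with 5000"
]
# Same scenario with the assertion quietly weakened.
tampered = [
    "Given a member with 5000 miles\n"
    "When they ask for their balance\n"
    "Then the bot replies with something"
]

print(hash_assertions(reformatted) == recorded)  # True
print(hash_assertions(tampered) == recorded)     # False
```

A fingerprint like this only helps if it is stored somewhere the agent can't casually rewrite, which is presumably where the tamper-proof part comes in.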

Try It Yourself

Browse the Tessl registry: over a thousand evaluated skills and ten thousand library documentation tiles, many with evals so you can measure agent adherence before trusting it with your codebase.

Viktor has volunteered (he doesn’t know this yet) to package OpenSpec as a Tessl tile too, since he loves it so much. One registry, one install command, all your agent context.

tessl init

tessl install tessl-labs/intent-integrity-kit

Star the intent-integrity-chain project on GitHub if you want to follow along as we build this out.

And if you want to understand why we keep calling LLMs “monkeys,” watch the JFokus talk.

The stream is coming to YouTube. Viktor and I are collecting episodes before unleashing them on the unsuspecting universe.

Baruch Sadogursky is a Developer Advocate at Tessl, where he helps developers stop vibecoding and start spec-driven development. Previously, he spent years at JFrog convincing people that artifact repositories matter. He was right about that, and he’s starting to suspect he’s right about agent skill registries, too.

Resources

Related Articles

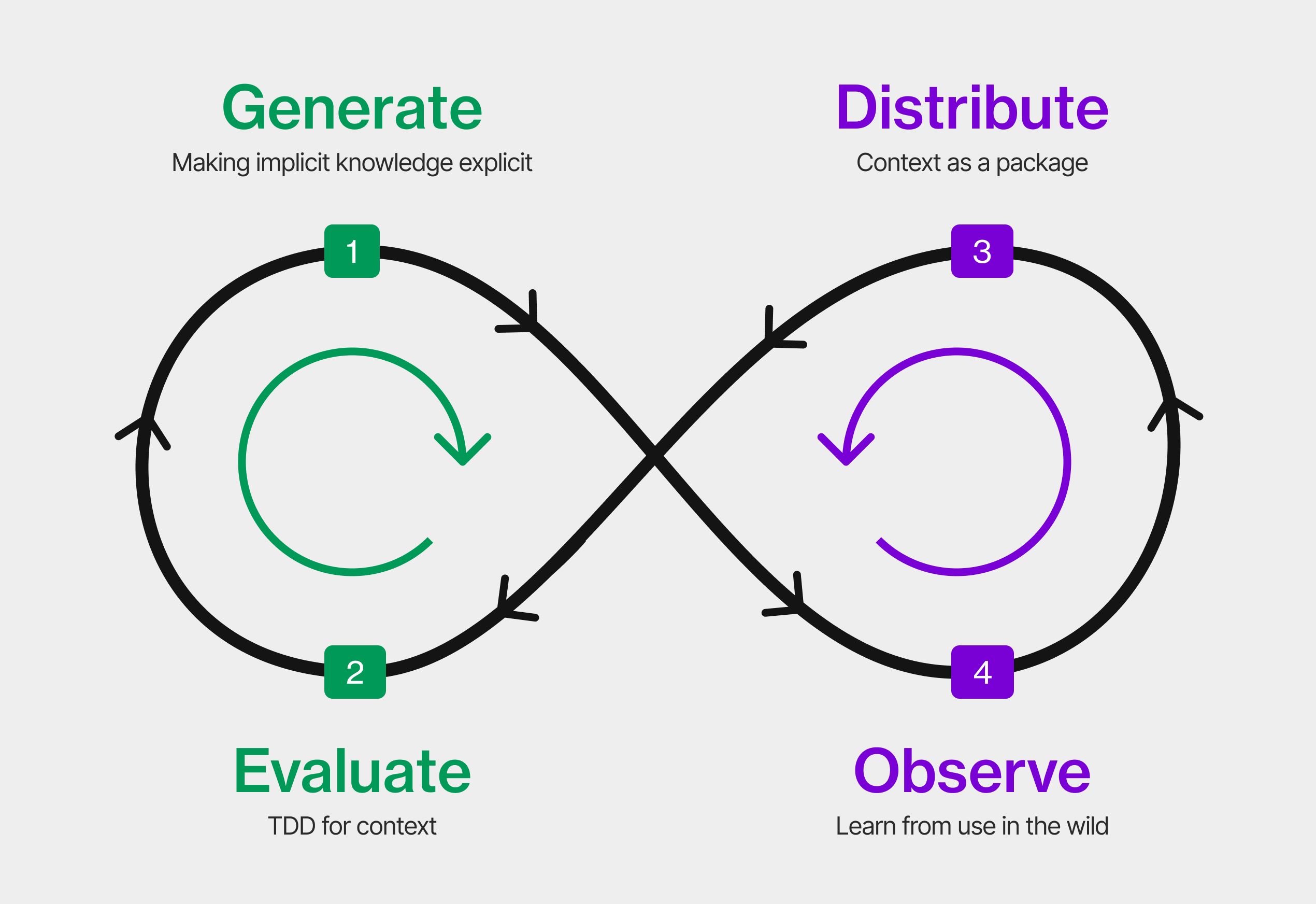

The Context Development Lifecycle: Optimizing Context for AI Coding Agents

19 Feb 2026

Announcing skills on Tessl: the package manager for agent skills

29 Jan 2026

What Are Agent Skills? (And Why You'll Never Want to Push Code Without One Again)

13 Feb 2026

My Coding Agent Lied to Me (And I Have the Screenshots)

30 Jan 2026

My Coding Agent Invented an API That Doesn’t Exist (And Blamed CORS When It Failed)

6 Feb 2026